Tom Siegfried, editor in chief of Science News, was born in Ohio. He earned an undergraduate degree from Texas Christian University with majors in journalism, chemistry and history, and has a master of arts with a major in journalism and a minor in physics from the University of Texas at Austin.


The Dice of Life

How probability rules the universe, society and science

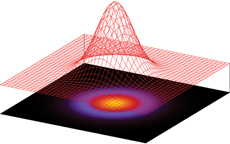

Science is often presented as the source of certain knowledge, a cosmic constitution that governs the behavior of physical phenomena and the processes of life. Which it does. But that constitution does not decree a cause-and-effect certainty of unbreakable laws. Rather it encompasses principles of probability and statistics; the "laws" it establishes are those of chance.

From atoms and molecules, to life's evolution, to human nature and human society and even the existence and properties of the physical universe, science's insight into nature mirrors lessons learned from roulette wheels and flipping coins. Most important (and least understood) of all, science's methods of making discoveries rely (almost blindly) on statistical reasoning, which makes policy making based on scientific studies a very risky business.

Terry's Takes


A Brief History of Chance

Although games of chance were known from antiquity, early modern scientists like Galileo and Newton never used chance in their science.

Statistics as part of science began when unexpected patterns were noticed in census data – for instance, the annual number of suicides in France was 'about' 42 – sometimes a few more, sometimes a few fewer (forming a 'normal distribution'). Adolphe Quetelet (1796–1874) developed an influential theory of Social Physics. Sir Francis Galton (1822–1911), Darwin's cousin, brought statistics into biology, obsessively measuring normal distributions – chance variations – of all sorts of characteristics in biological populations (sizes, shapes, etc.). Darwin's introduction of 'random variation' as operative in biological evolution (random mutation) was strongly influenced by the accumulated evidence of Galton and cohorts.

The Theory of Observational Error dominated thinking about 'uncertainty' and 'apparent' chance-phenomena well into the 20th century. Darwin, for instance, denied that he meant that there was 'real' randomness in evolution – which would have directly conflicted with the enormously successful classical causality of Newtonian Mechanics. Darwin suggested that evolution just 'appeared' to be chance-governed because we couldn't observe it in enough detail.

The common assumption of modern science was that with improved and more detailed observations the 'apparent' chance-governed phenomena would be resolved into classical causal relationships. Chance wasn't 'real'. Uncertainty, at least in principle, could be completely eliminated.

With quantum theory and observations such as radioactive decay, physicists were forced to accept that chance was real. Indeed, the current textbook consensus is that at the most fundamental level the 'stuff of the universe' – the substratum of reality – is chance-governed. This presents the modern problem of accounting for the 'appearance' of regular classical causality (see decoherence – although I think this works only in limited circumstances).

Evolutionary biologist Stephen Jay Gould, in his book Wonderful Life, argued that 'random variation' was an essential component of Darwinian evolutionary processes. Gould asked whether, if we went back 3.7 billion years on Earth and re-ran the tape of biological evolution, we would have any reason to expect the same outcome – or even anything remotely similar. Gould's answer was that there was no reason. The Theory allowed almost anything. For instance, there was nothing in the Darwinian model that would have predicted multi-cellular life.

Cosmologist John Barrow, in his book The Constants of Nature, explicitly referring to Gould's question, asked: if we went back 15 billion years and re-ran the time-evolution of the cosmos from the Big Bang, what reason do we have to expect the same outcome? Barrow's answer was that there was no reason. Fellow cosmologist Brian Greene, in his book The Fabric of the Cosmos, concludes that 'scientists (being judged on the success of their theories to predict) are now in the worst possible position imaginable since we are unable to predict anything'.

Another way to say this is that if science is unable to predict the time-evolution of a system (Earth or universe) then whatever the 'outcome' of the time-evolution – in other words, 'the world as it is NOW' – cannot be explained scientifically even in principle.


Learning Objectives

Once you have completed a reasonable exploration of the clues and questions raised in this Study Guide and attended the October 7th presentation by Tom Siegfried, you will be prepared:

1. To raise intelligent questions about the use of statistics in science.

2. To raise intelligent questions about statistical inferences in medicine, economics and politics.

3. To begin to understand the implications of 'chance being real'.

4. To join the 21st century search for a post-scientific theory of the universe and our place in it.

Glossary

Classical Causality: A specific event A regularly and predictably causes a specific state of affairs B.

Chance-governed Causality: A specific event Q regularly and predictably does NOT cause a specific state of affairs R. When the possible outcomes are limited – as with the heads/tails of a fair coin flip or the six sides in the roll of a fair die – then there is a pattern in the ensemble of events (e.g. 50/50 heads/tails).

Each of the Theories of Probability has its limitations, its proponents, and its detractors.

The Frequency Theory of Probability says 'the probability of an event' is (means) the limit of the percentage of times that the event occurs in repeated, independent trials under essentially the same circumstances. (For instance, in repeated flips of a fair coin, the percentage of heads approaches 50%.)
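The frequency idea can be tried out with a short simulation. The following is only a minimal sketch in Python: the function name `heads_frequency`, the fixed seed and the chosen numbers of flips are illustrative assumptions, and a pseudo-random number generator stands in for a real coin.

```python
import random


def heads_frequency(num_flips, seed=0):
    """Return the fraction of heads in num_flips simulated fair coin flips.

    The seed is fixed only so that repeated runs give the same numbers;
    it is an illustrative choice, not part of the frequency theory.
    """
    rng = random.Random(seed)
    # Count a flip as heads when the random number falls below 0.5.
    heads = sum(1 for _ in range(num_flips) if rng.random() < 0.5)
    return heads / num_flips


if __name__ == "__main__":
    # The fraction of heads should drift toward 0.5 as the flips accumulate.
    for n in (10, 100, 10_000, 100_000):
        print(n, heads_frequency(n))
```

With only a handful of flips the fraction can sit far from 0.5; with many flips it typically settles close to it. That settling is what the frequency theory means by 'the limit'.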

The Subjective Theory of Probability says 'the probability' is a number that measures how strongly we believe an event will occur. The number is on a scale of 0% to 100%, with 0% indicating that we are completely sure it won't occur, and 100% indicating that we are completely sure that it will occur.

Statistical significance: The significance level of a hypothesis test is the chance that the test erroneously rejects the null hypothesis when the null hypothesis is true.

(For instance, the null hypothesis might be that this drug doesn't improve a specific medical condition.)
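To see what this definition means in practice, here is a hedged sketch that uses a fair coin as a stand-in for a true null hypothesis. The experiment is repeated many times, and we count how often a conventional two-sided test (normal approximation, cutoff 1.96, nominally a 5% significance level) wrongly declares the coin unfair. The function names, the sample sizes and the choice of test are assumptions made for illustration; none of them comes from the Study Guide.

```python
import math
import random


def rejects_fair_coin(num_heads, num_flips, z_cutoff=1.96):
    """Two-sided z-test (normal approximation) of 'the coin is fair'.

    Returns True when the observed number of heads is far enough from
    num_flips / 2 that the test rejects the null hypothesis.
    """
    expected = num_flips / 2
    std_dev = math.sqrt(num_flips) / 2  # standard deviation of heads for a fair coin
    z = (num_heads - expected) / std_dev
    return abs(z) > z_cutoff


def false_rejection_rate(num_experiments=5000, num_flips=100, seed=0):
    """Fraction of experiments on a truly fair coin in which the test rejects."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(num_experiments):
        # The null hypothesis is true here: every flip is fair.
        heads = sum(1 for _ in range(num_flips) if rng.random() < 0.5)
        if rejects_fair_coin(heads, num_flips):
            rejections += 1
    return rejections / num_experiments


if __name__ == "__main__":
    print(false_rejection_rate())
```

Even though the coin is fair in every simulated experiment, the test should reject in roughly one experiment out of twenty. That error rate is the significance level: it measures how often the test errs when the null hypothesis is true, not the chance that a particular rejection is wrong.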